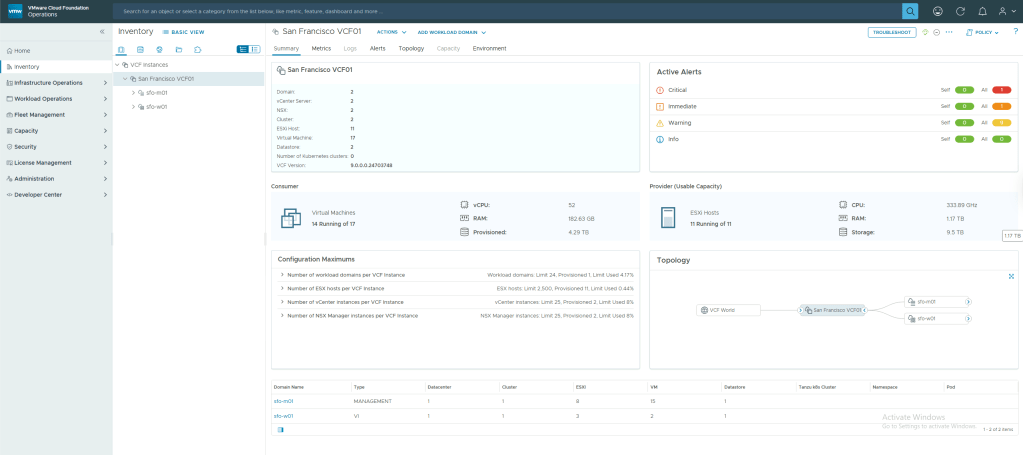

VCF 9.0 introduced the concept of a VCF fleet, which is defined as:

An environment that is managed by a single set of fleet-level management components – VCF Operations & VCF Automation. A VCF fleet contains one or more VCF Instances and may contain one or more standalone vCenter instances, managed by the VCF Operations instance for the fleet. The management domain of the first VCF Instance in the VCF fleet typically hosts the fleet-level management components.

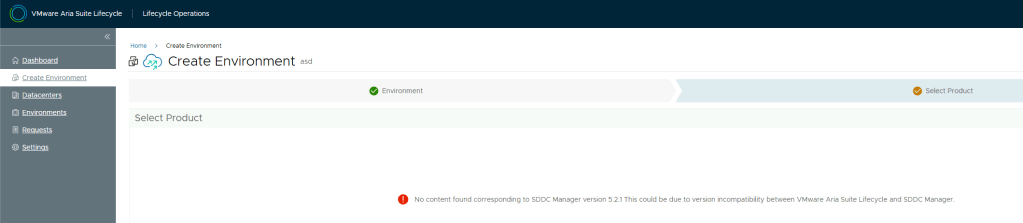

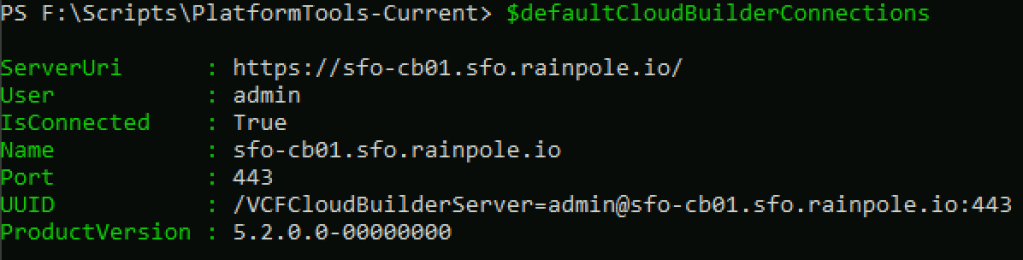

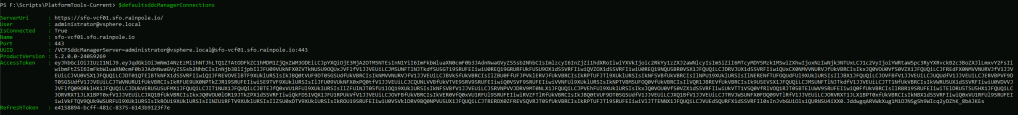

When deploying a new VCF fleet, you have the option to deploy the fleet-level management components using the VCF Installer. Because I am upgrading from VCF 5.2, where I did not have Aria Operations or Aria Automation, I need to deploy new instances of each component (pre-existing instances could have been upgraded instead). You can deploy them manually from OVA; however, there is a new SDDC Manager API that automates the process using a JSON payload.

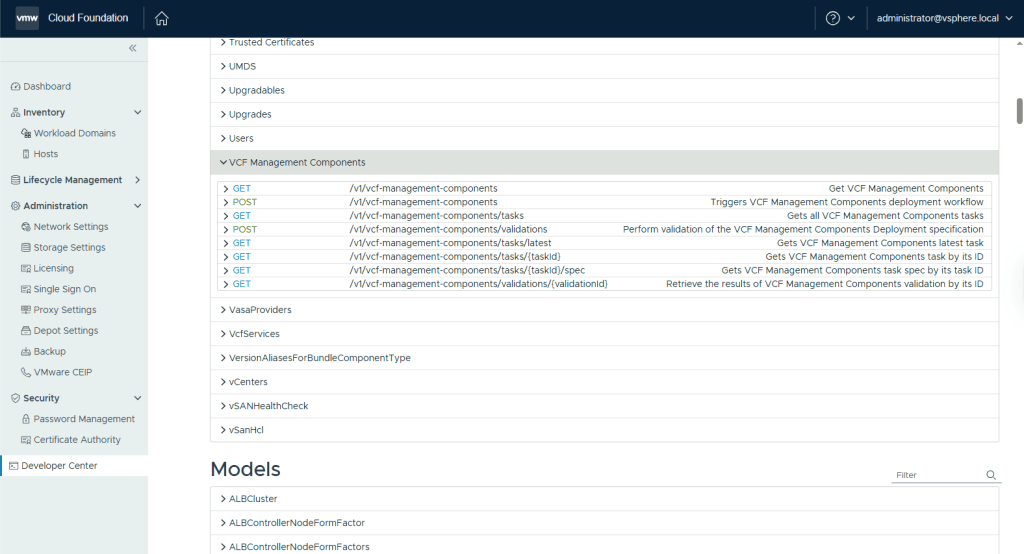

The API can be accessed via the SDDC Manager Developer Center, under VCF Management Components.

The JSON payload to deploy VCF Operations (including a collector and the fleet management appliance) and VCF Automation is shown below.

NOTE: This spec is for a simple, single-node deployment of the fleet management components, where VCF Operations and VCF Automation are deployed to an NSX overlay segment and the VCF Operations collector is deployed to the management DVPG.

{

"vcfOperationsFleetManagementSpec": {

"hostname": "flt-fm01.rainpole.io",

"rootUserPassword": "VMw@re1!VMw@re1!",

"adminUserPassword": "VMw@re1!VMw@re1!",

"useExistingDeployment": false

},

"vcfOperationsSpec": {

"nodes": [

{

"hostname": "flt-ops01a.rainpole.io",

"rootUserPassword": "VMw@re1!VMw@re1!",

"type": "master"

}

],

"useExistingDeployment": false,

"applianceSize": "medium",

"adminUserPassword": "VMw@re1!VMw@re1!"

},

"vcfOperationsCollectorSpec": {

"hostname": "sfo-opsc01.sfo.rainpole.io",

"rootUserPassword": "VMw@re1!VMw@re1!",

"applianceSize": "small"

},

"vcfAutomationSpec": {

"hostname": "flt-auto01.rainpole.io",

"adminUserPassword": "VMw@re1!VMw@re1!",

"useExistingDeployment": false,

"ipPool": [

"192.168.11.51",

"192.168.11.52"

],

"internalClusterCidr": "250.0.0.0/15",

"vmNamePrefix": "flt-auto01"

},

"vcfInstanceName": "San Francisco VCF01",

"vcfMangementComponentsInfrastructureSpec": {

"localRegionNetwork": {

"networkName": "sfo-m01-cl01-vds01-pg-vm-mgmt",

"subnetMask": "255.255.255.0",

"gateway": "10.11.10.1"

},

"xRegionNetwork": {

"networkName": "xint-m01-seg01",

"subnetMask": "255.255.255.0",

"gateway": "192.168.11.1"

}

}

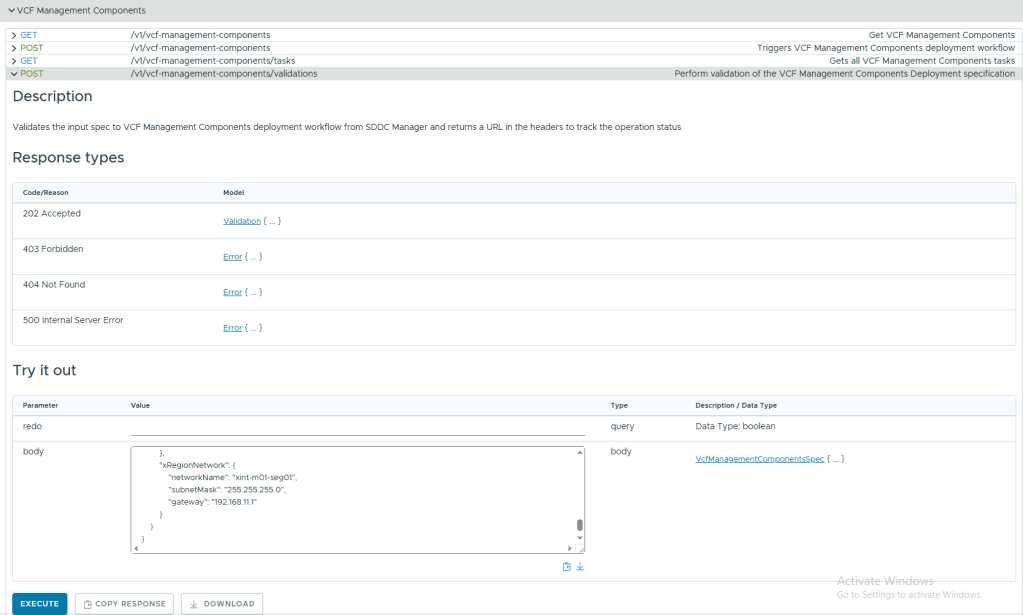

}Validate your JSON payload using the POST /v1/vcf-management-components/validations API.
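Before calling the validation API, a quick local check can catch copy-and-paste mistakes in the payload. The following is only a sketch and not a substitute for the API validation: the function name `precheck` is hypothetical, the expected keys are simply those in my payload, and the rule that the VCF Automation `ipPool` must fall inside the `xRegionNetwork` subnet is my assumption based on where the appliance is deployed.

```python
import ipaddress

# Top-level keys taken from the payload in this post.
EXPECTED_KEYS = {
    "vcfOperationsFleetManagementSpec",
    "vcfOperationsSpec",
    "vcfOperationsCollectorSpec",
    "vcfAutomationSpec",
    "vcfInstanceName",
    "vcfMangementComponentsInfrastructureSpec",  # spelled as in the payload
}


def precheck(spec: dict) -> list[str]:
    """Return a list of problems found; an empty list means none were found."""
    problems = [f"missing key: {key}" for key in sorted(EXPECTED_KEYS - set(spec))]
    if problems:
        return problems

    # Assumption: the VCF Automation ipPool must sit inside the xRegionNetwork subnet.
    xregion = spec["vcfMangementComponentsInfrastructureSpec"]["xRegionNetwork"]
    subnet = ipaddress.ip_network(
        f'{xregion["gateway"]}/{xregion["subnetMask"]}', strict=False
    )
    for address in spec["vcfAutomationSpec"]["ipPool"]:
        if ipaddress.ip_address(address) not in subnet:
            problems.append(f"{address} is outside {subnet}")
    return problems
```

Load the payload with `json.load` and pass the resulting dict to `precheck`; anything it reports is worth fixing before the validation request is sent.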

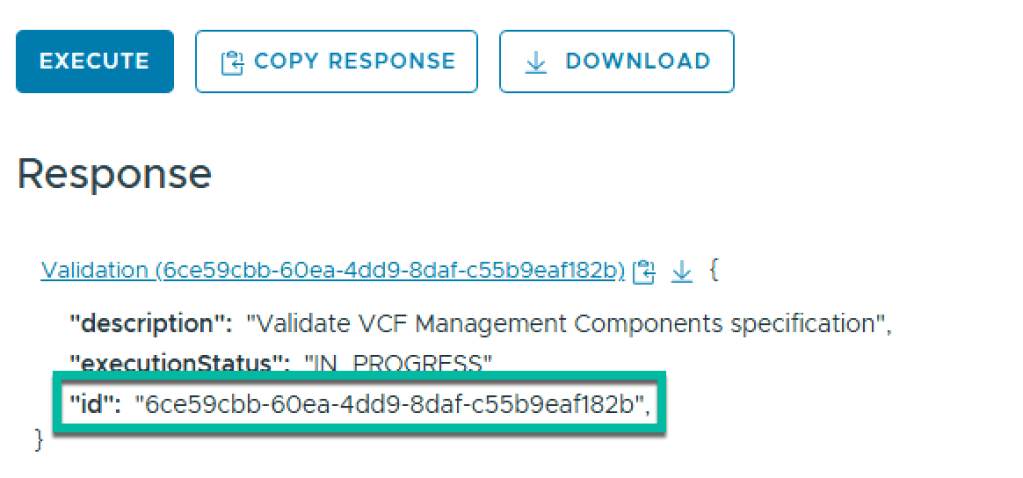

Executing the validation request returns a task ID. Copy this ID to monitor the task.

Check the status of the validation task using GET /v1/vcf-management-components/validations/{validationId} until its resultStatus is SUCCEEDED.
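If you would rather script the status check than refresh it by hand, a polling loop along these lines could work. It is a minimal sketch: `wait_for_validation` and the `fetch` callable are hypothetical names, `fetch` is expected to perform the GET request and return the decoded JSON body, and treating `FAILED` as the terminal error value is an assumption on my part.

```python
import time
from typing import Callable


def wait_for_validation(
    fetch: Callable[[str], dict],
    validation_id: str,
    interval_s: float = 30.0,
    timeout_s: float = 1800.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Poll until resultStatus is SUCCEEDED; raise on FAILED or on timeout.

    `fetch` is a hypothetical callable that performs
    GET /v1/vcf-management-components/validations/{validationId}
    and returns the decoded JSON body.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        body = fetch(validation_id)
        status = body.get("resultStatus")
        if status == "SUCCEEDED":
            return body
        if status == "FAILED":  # assumption: FAILED is the terminal error value
            raise RuntimeError(f"validation {validation_id} failed")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"validation {validation_id} still {status!r}")
        sleep(interval_s)
```

Keeping the HTTP call behind `fetch` means the loop can be tried out with canned responses before it is pointed at a real SDDC Manager.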

Now, submit the same JSON payload to POST /v1/vcf-management-components, and go grab a coffee!
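Since the validation and deployment calls take the same payload and differ only in their path, both requests can be built by one helper outside the Developer Center. This is a sketch under assumptions: `build_request` is a hypothetical helper, the SDDC Manager FQDN is whatever yours is, and I am assuming a bearer token has already been obtained from SDDC Manager.

```python
import json
import urllib.request

BASE_PATH = "/v1/vcf-management-components"


def build_request(
    sddc_manager: str, token: str, spec: dict, validate: bool
) -> urllib.request.Request:
    """Build (but do not send) the POST for validation or for deployment."""
    path = BASE_PATH + ("/validations" if validate else "")
    return urllib.request.Request(
        url=f"https://{sddc_manager}{path}",
        data=json.dumps(spec).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Assumption: a bearer token obtained beforehand from SDDC Manager.
            "Authorization": f"Bearer {token}",
        },
    )
```

Passing the result to `urllib.request.urlopen` sends it; call the helper with `validate=True` first, and with `validate=False` only once the validation has succeeded.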

Once the deployment completes, you should have a VCF Operations instance to manage your fleet, along with a VCF Automation instance for the consumption layer.